Introduction

The development of laser technology over 50 years ago led to the creation of light detection and ranging (LIDAR) systems, which delivered a breakthrough in the way distances are calculated. The principles of LIDAR are much the same as those used by radar. The key difference is that radar systems detect radio waves reflected by objects, while LIDAR uses laser signals. Both techniques usually employ the same type of time-of-flight method to determine an object's distance. However, as the wavelength of laser light is much shorter than that of radio waves, LIDAR systems deliver superior measurement accuracy. LIDAR systems can also examine other properties of the reflected light, such as its frequency content or polarization, to reveal additional information about the object.
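
As a rough illustration of the time-of-flight method, the following Python sketch converts a measured round-trip time into a distance. The function name and the 1 µs example echo are assumptions made for illustration, and the sketch uses the vacuum speed of light, ignoring the slightly slower propagation in air.

```python
# Speed of light in vacuum (m/s). Propagation in air is slightly slower,
# which this sketch ignores.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_to_distance_m(round_trip_time_s: float) -> float:
    """Convert a round-trip time of flight into a one-way distance."""
    # The pulse travels to the target and back, hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


if __name__ == "__main__":
    # Hypothetical echo arriving 1 microsecond after the pulse was sent.
    print(f"{tof_to_distance_m(1e-6):.1f} m")  # prints "149.9 m"
```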

LIDAR systems are now being employed in an ever-increasing variety of applications. These include, but are not limited to, autonomous driving, geological and geographical mapping, seismology, meteorology, atmospheric physics, surveillance, altimetry, forestry, navigation, vehicle tracking, surveying, and environmental protection.

LIDAR configuration

To match the many different applications, LIDAR systems come in a wide range of designs and configurations. Each system requires suitable optical-to-electrical sensors and appropriate data acquisition electronics. The light detection system is either incoherent, where the received energy is measured directly through amplitude changes in the reflected signal, or coherent, where shifts in the reflected signal's frequency (such as those caused by the Doppler effect) or phase are observed. Similarly, the light source can be either a low-power micro-pulse design, where intermittent pulse trains are transmitted, or a high-energy one. Micro-pulse systems are ideal for applications where "eye-safe" operation is essential (such as surveying and ground-based vehicle tracking), while high-energy systems are typically deployed where long distances and low-level reflections are to be expected (as in atmospheric physics and meteorology studies).
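
To give a feel for the frequency shifts a coherent system observes, the sketch below evaluates the standard non-relativistic Doppler relation for a reflected signal, f_d = 2v/λ. The function name, the 1550 nm wavelength and the 10 m/s target speed are assumptions chosen only for illustration. They are not taken from any particular LIDAR system.

```python
def doppler_shift_hz(radial_velocity_m_per_s: float, wavelength_m: float) -> float:
    """Doppler shift of light reflected from a target moving along the beam.

    Uses the non-relativistic approximation f_d = 2 * v / wavelength.
    The factor of 2 arises because the light is shifted once on the way
    to the moving target and once more on reflection.
    """
    return 2.0 * radial_velocity_m_per_s / wavelength_m


if __name__ == "__main__":
    # Hypothetical example: 1550 nm laser, target approaching at 10 m/s.
    shift = doppler_shift_hz(10.0, 1550e-9)
    print(f"{shift / 1e6:.2f} MHz")  # prints "12.90 MHz"
```

A shift of this size lies well within the bandwidth of a fast digitizer, which is why the frequency content of the detector signal can be analyzed directly after acquisition.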

Each LIDAR system needs an appropriate sensor to detect the reflected laser signals and convert them into an electrical signal. The most common sensor types are photomultiplier tubes (PMTs) and solid-state photodetectors (such as photodiodes). In general, PMTs are used in applications where visible light is employed, while photodiodes are more common in infrared systems. However, both sensor types are widely used, and the choice depends largely on the light characteristics that need to be detected, the performance level required, and the cost.

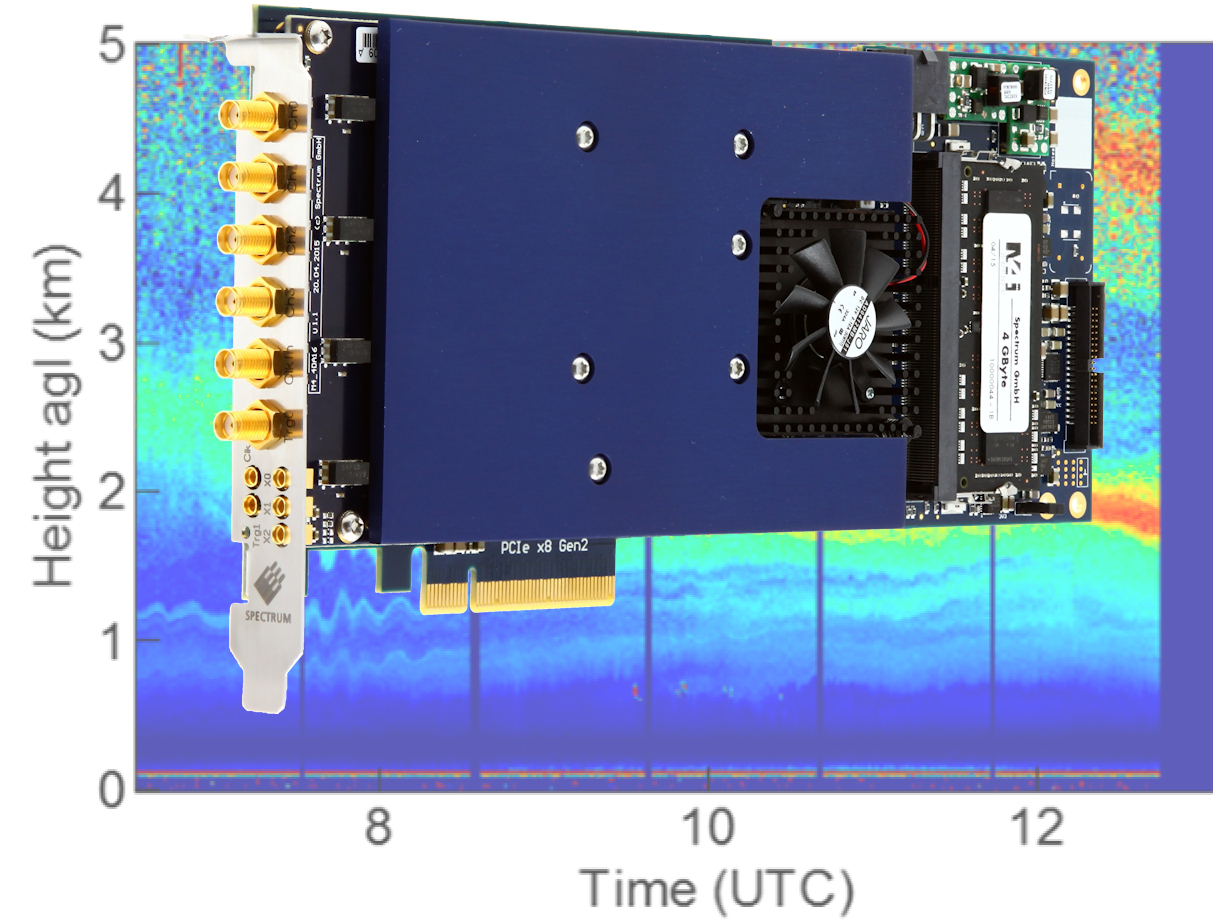

Most importantly, the sensors produce fast electrical signals that need to be acquired and analyzed. For the majority of LIDAR applications, the most popular form factor for a signal capture card is PCIe, as this allows the card to be installed directly inside most modern PCs. PCIe cards are supplied by many digitizer vendors and offer a simple way to create a powerful, easy-to-use data acquisition system. As the PCIe bus delivers very high data throughput rates, signal acquisition, data transfer and analysis are typically much faster than with other, more conventional, acquisition systems. Some vendors, such as Spectrum Instrumentation, also supply digitizers in other industry-standard formats, such as PXIe or the digitizerNETBOX, a compact LXI/Ethernet-based device. These are a good choice for mobile environments with space restrictions or vibration issues, such as airborne or mobile LIDAR.

LIDAR performance classes

For LIDAR applications, there are three separate performance classes:

For the fastest light pulses

For the capture and analysis of very fast signals, a card needs sampling rates of up to 10 GS/s and a high bandwidth of more than 1 GHz. An example of such a digitizer is the Spectrum Instrumentation M5i.33xx series, which offers up to 2 channels per card on PCIe platforms with 12-bit resolution and a sampling rate of up to 6.4 GS/s. The M4i.22xx series offers up to 4 channels per card on PCIe and PXIe platforms, or up to 24 channels on an LXI platform. This combination makes the cards ideal for working with fast sensors that produce pulses in the nanosecond or even sub-nanosecond range. Furthermore, a fast 5 GS/s sampling rate enables timing measurements with sub-nanosecond resolution. This is ideal for situations where small frequency shifts, such as those produced by the Doppler effect, need to be detected and measured.
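
The link between sampling rate and timing resolution can be made concrete with a short calculation. The sketch below, whose function names are illustrative assumptions, converts a sampling rate into the time between samples and into the corresponding one-way distance per sample for a time-of-flight measurement. It ignores interpolation between samples, which can improve on this figure in practice.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value, used as an approximation


def sample_interval_s(sample_rate_hz: float) -> float:
    """Time between two consecutive samples."""
    return 1.0 / sample_rate_hz


def range_per_sample_m(sample_rate_hz: float) -> float:
    """One-way distance that corresponds to a single sample interval."""
    # Divide by 2 because the light covers the distance twice (out and back).
    return SPEED_OF_LIGHT_M_PER_S * sample_interval_s(sample_rate_hz) / 2.0


if __name__ == "__main__":
    rate = 5e9  # the 5 GS/s sampling rate mentioned in the text
    print(f"{sample_interval_s(rate) * 1e9:.1f} ns per sample")   # 0.2 ns
    print(f"{range_per_sample_m(rate) * 100:.1f} cm per sample")  # 3.0 cm
```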

For low level signals and high sensitivity

When a wide signal dynamic range and very high sensitivity are needed, a card must be able to acquire signals with amplitudes that go down into the millivolt range, at sampling rates of a few hundred MS/s and with a matching bandwidth. Vertical resolution needs to be high, with 16 bits preferred. An example is the Spectrum M4i.44xx series, with 14-bit resolution at 500 MS/s or 16-bit resolution at 250 MS/s. These units also have programmable full-scale gain ranges, from ±200 mV to ±10 V, making them suitable for applications where low-level signals and small amplitude variations need to be observed and measured.
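
To see why resolution and input range matter for small signals, the sketch below computes the voltage step of one least significant bit (LSB) for an ideal converter, together with the textbook ideal signal-to-noise ratio of 6.02 × N + 1.76 dB. Both functions are illustrative assumptions about an ideal ADC. Real digitizers have noise and distortion, so their effective resolution is lower than these figures.

```python
def lsb_volts(full_scale_plus_minus_v: float, bits: int) -> float:
    """Voltage step of one LSB for an ideal ADC with a +/- input range."""
    # A range of +/-V spans 2*V in total, divided into 2**bits steps.
    return 2.0 * full_scale_plus_minus_v / (2 ** bits)


def ideal_snr_db(bits: int) -> float:
    """Ideal quantization-limited SNR for a full-scale sine wave."""
    return 6.02 * bits + 1.76


if __name__ == "__main__":
    # The +/-200 mV range mentioned in the text, at 14 and 16 bits.
    for bits in (14, 16):
        step_uv = lsb_volts(0.2, bits) * 1e6
        print(f"{bits} bit: LSB = {step_uv:.1f} uV, ideal SNR = {ideal_snr_db(bits):.2f} dB")
```

On the ±200 mV range, an ideal 16-bit converter resolves steps of roughly 6 µV, compared with roughly 24 µV at 14 bits.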

For cost effective mid-range performance

The third group is for applications that need high sensitivity but have less demanding timing requirements. Sampling rates of up to 100 MS/s and 16-bit vertical resolution, such as those offered by Spectrum's M2p.59xx series, fit into this application area. These units are used in long-range LIDAR applications where high signal sensitivity is essential, and also in situations where high-density, multi-channel recording is required.

Advanced digitizer features to look for in LIDAR applications

Digitizers include a number of different acquisition modes that make efficient use of the on-board memory and deliver ultrafast triggering capabilities, so that no important events are missed. These modes include Multi and Gated acquisition, complete with time stamping, FIFO streaming and FPGA-based high-speed Block Averaging.
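
The idea behind block averaging can be sketched in a few lines of software. The example below is only a plain Python illustration of the principle, not the FPGA implementation on any digitizer, and the pulse shape, noise level and segment count are hypothetical. It averages many repeated acquisitions sample by sample, which reduces uncorrelated noise by roughly the square root of the number of segments while a repetitive signal is preserved.

```python
import random
import statistics


def block_average(segments):
    """Average equally long segments sample by sample."""
    count = len(segments)
    # zip(*segments) groups the k-th sample of every segment together.
    return [sum(samples) / count for samples in zip(*segments)]


def noisy_segment(rng, length, noise_sigma):
    """Hypothetical echo: a small pulse at samples 40..49 buried in noise."""
    return [
        (0.5 if 40 <= i < 50 else 0.0) + rng.gauss(0.0, noise_sigma)
        for i in range(length)
    ]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the run is repeatable
    segments = [noisy_segment(rng, 100, 1.0) for _ in range(256)]
    averaged = block_average(segments)
    # Compare the noise in the pulse-free region before and after averaging.
    print("single segment noise:", statistics.pstdev(segments[0][:40]))
    print("averaged noise:      ", statistics.pstdev(averaged[:40]))
    print("averaged pulse level:", statistics.mean(averaged[40:50]))
```

With 256 segments the noise should drop by a factor of about 16, so a pulse that is invisible in a single acquisition becomes clearly measurable in the averaged result.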

How to handle the huge amounts of data

There are three main approaches to processing the acquired data. The first simply sends the data to the CPU of a host PC. This conventional approach provides an easy solution. Users can write their own analysis programs based on the vendor's API, or use third-party measurement software such as SBench 6, MATLAB and LabVIEW.

The overall performance and measurement speed are then limited by the CPU's available resources. In demanding applications this can be a problem, as the CPU shares its processing power with the rest of the PC system and also controls the data transfer.

The second approach uses field-programmable gate array (FPGA) technology. This is a powerful solution, but it comes with much higher cost and complexity. Large FPGAs are expensive, and creating custom firmware requires a firmware development kit (FDK) for the digitizer, tools from the FPGA vendor, and specialized hardware programming skills.

Creating firmware isn't for everyone and even experienced developers can get bogged down in long development cycles. Furthermore, the solution is limited by the FPGA that is actually on the digitizer. For example, if the available block RAM is exhausted, there's nothing more that can be done.

The third approach, created by Spectrum Instrumentation, is new. Called SCAPP, it uses a standard off-the-shelf GPU (Graphics Processing Unit) that supports Nvidia's CUDA platform. The GPU connects directly with the digitizer without CPU interaction. This opens up the massively parallel architecture of the GPU for signal processing, with hundreds or even thousands of processing cores, several gigabytes of memory and calculation speeds of up to 12 TFLOPS. The structure of a CUDA card works well for analysis because it is designed for parallel data processing. This makes it ideal for tasks such as data conversion, digital filtering, averaging, baseline suppression, FFT window functions or even FFTs themselves, as these are easily handled in parallel. For example, a small GPU with 1k cores and a calculating speed of 3.0 TFLOPS is already capable of continuous data conversion, multiplexing, windowing, FFT and averaging at 500 MS/s on two channels with an FFT block size of 512k, and it can run for hours.
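
A quick calculation shows what the example workload above means in terms of data flow. The sketch below makes two assumptions that are not stated in the text: each sample is stored in 2 bytes, and "512k" means 512 × 1024 samples. The function name and dictionary keys are illustrative only.

```python
def stream_figures(sample_rate_hz, channels, bytes_per_sample, fft_size):
    """Return basic throughput figures for a continuous FFT pipeline."""
    return {
        # Raw data delivered every second, summed over all channels.
        "bytes_per_second": sample_rate_hz * channels * bytes_per_sample,
        # Time one FFT block takes to fill on a single channel.
        "block_duration_s": fft_size / sample_rate_hz,
        # FFT blocks that must be processed per second to keep up.
        "blocks_per_second": channels * sample_rate_hz / fft_size,
        # Frequency spacing between adjacent FFT bins.
        "bin_width_hz": sample_rate_hz / fft_size,
    }


if __name__ == "__main__":
    # 500 MS/s on two channels; 2 bytes per sample is an assumption.
    figures = stream_figures(500e6, 2, 2, 512 * 1024)
    print(f"data rate:     {figures['bytes_per_second'] / 1e9:.1f} GB/s")
    print(f"block length:  {figures['block_duration_s'] * 1e3:.3f} ms")
    print(f"blocks/second: {figures['blocks_per_second']:.1f}")
    print(f"bin width:     {figures['bin_width_hz']:.1f} Hz")
```

Under these assumptions, the processing stage has to absorb about 2 GB/s and complete roughly 1,900 large FFTs every second without falling behind, which illustrates why a massively parallel processor is attractive for this kind of task.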

Comparing the SCAPP approach to an FPGA-based solution reveals a big saving in the total cost of ownership. All that is required is a matching CUDA GPU and the software development kit. However, the largest cost saver is project development time. Instead of spending weeks trying to understand a supplier's FDK, the structure of the FPGA firmware, the FPGA design suite and the simulation tools, the user can start immediately by working with examples written in easy-to-understand C code and using common design tools.
